Blog 02-10-23

1 Arknights

1.1 Ashlock E2 Promotion

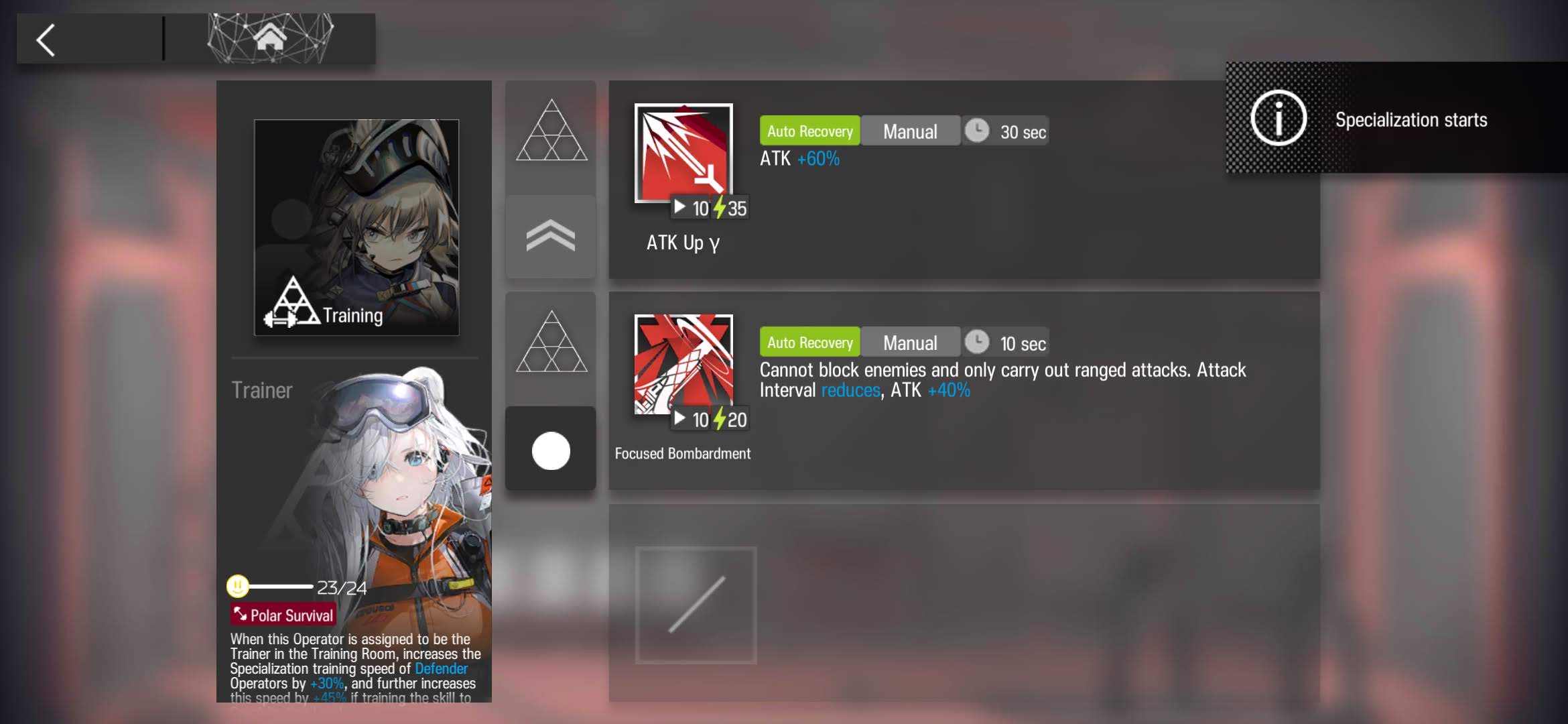

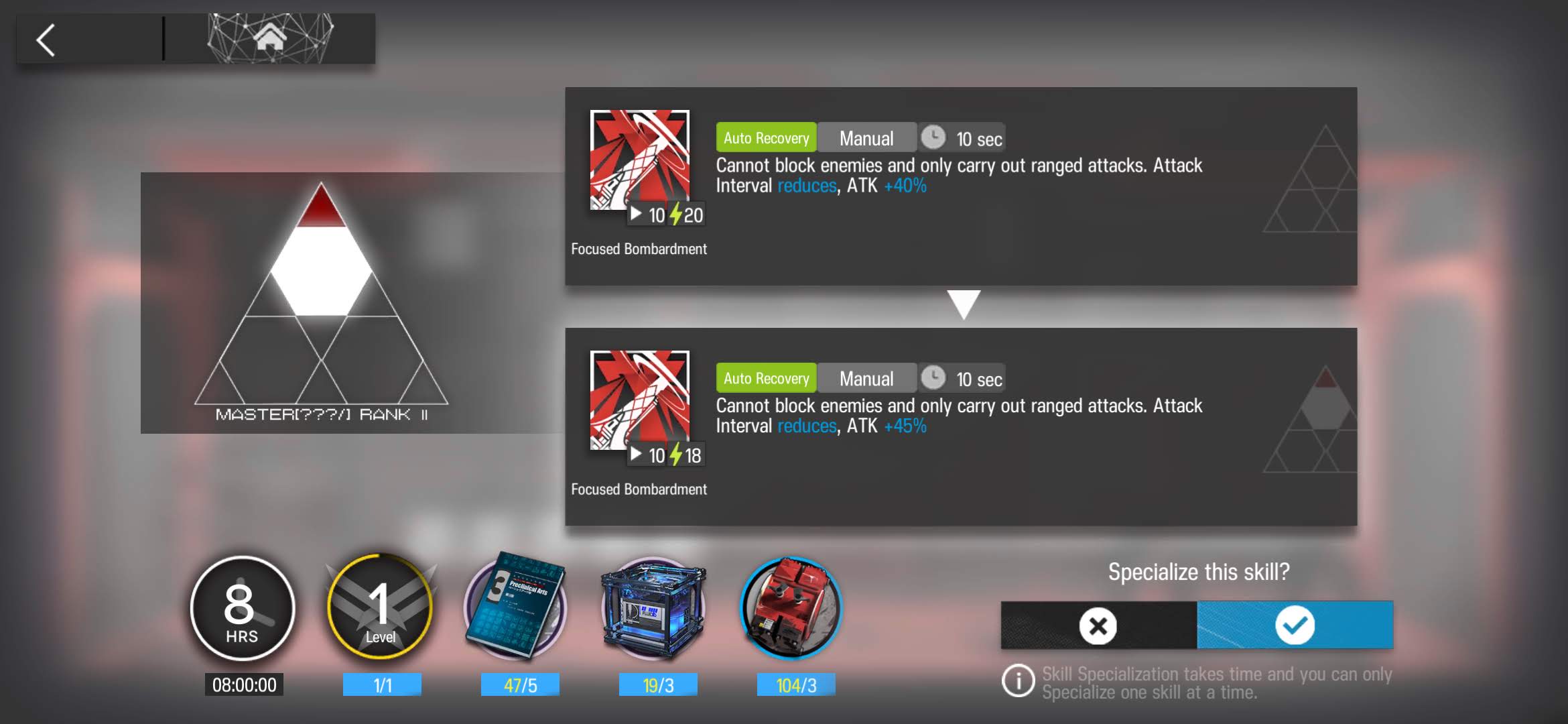

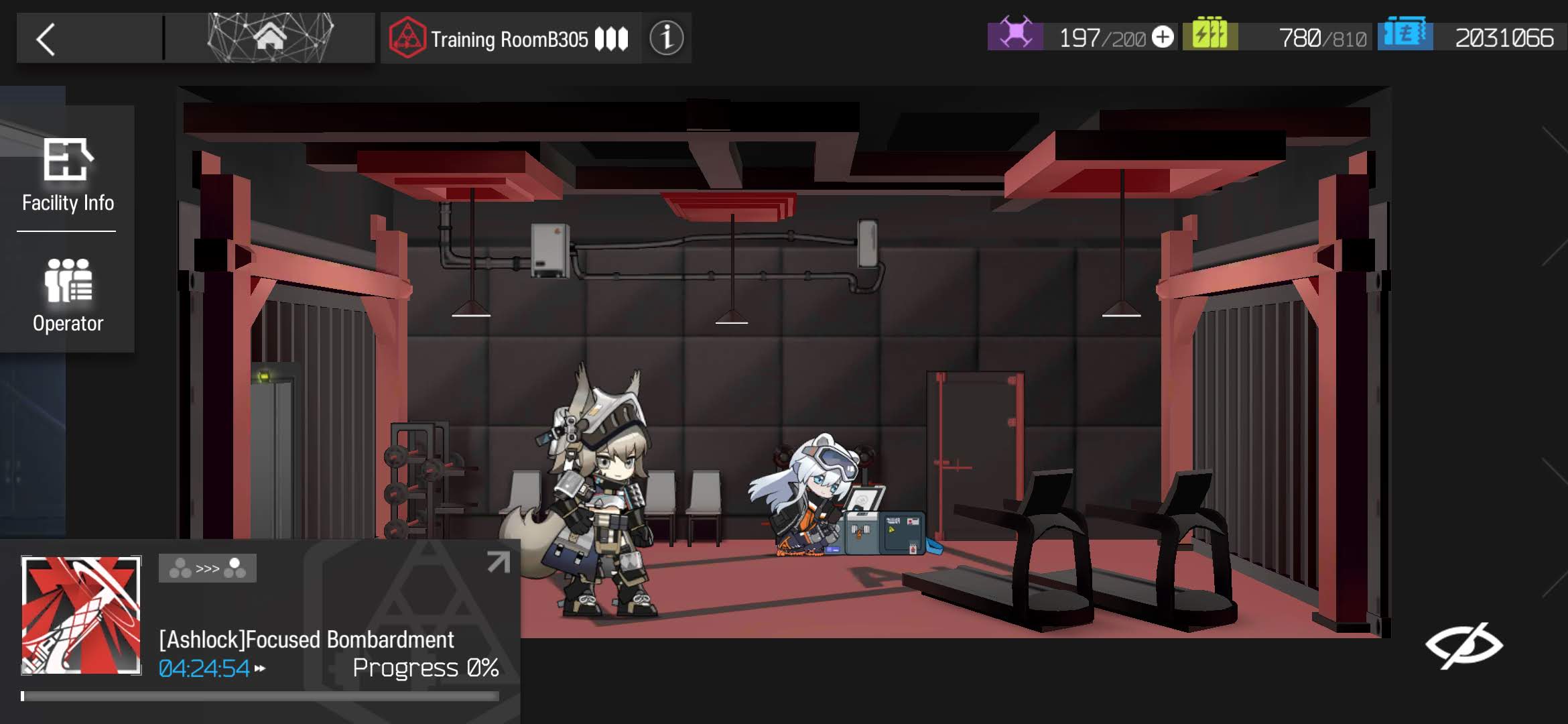

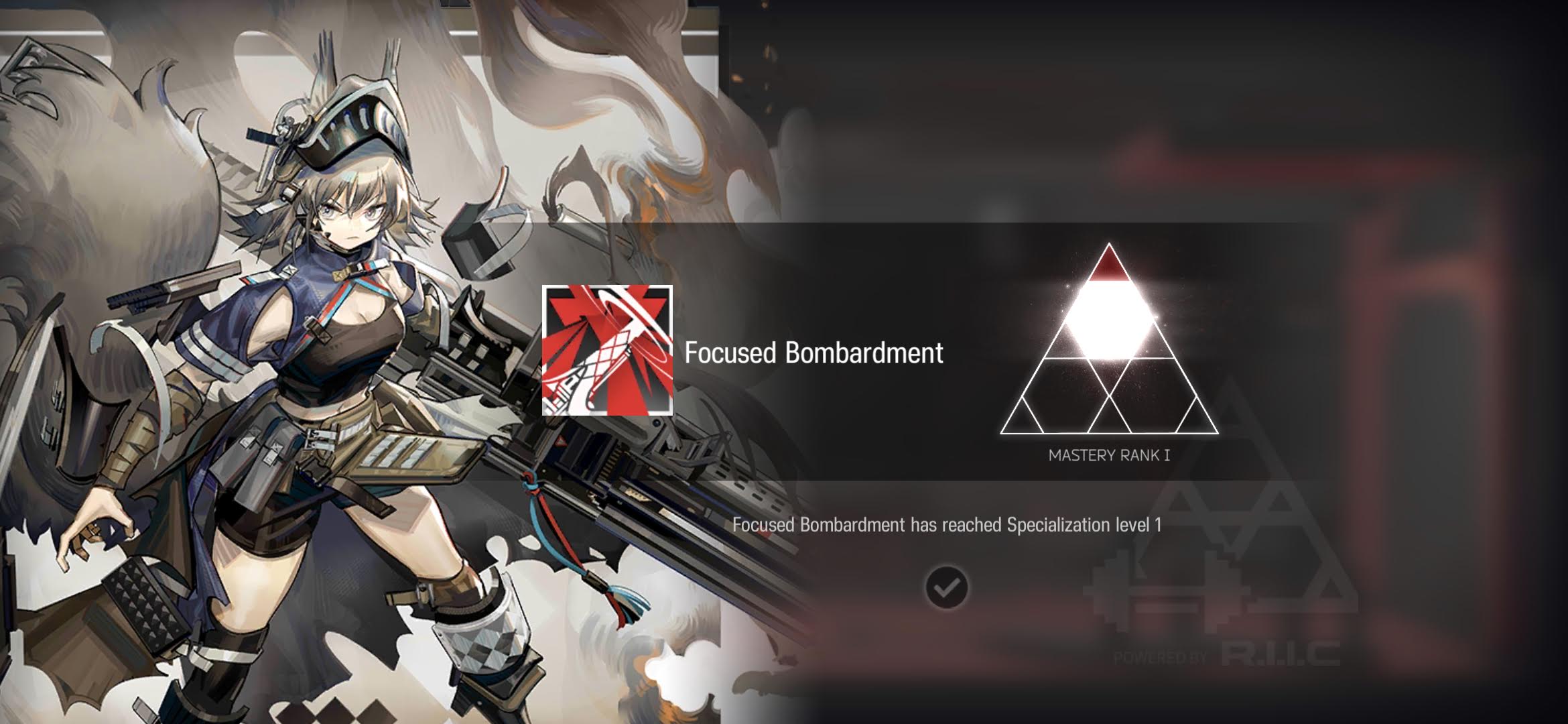

1.2 Ashlock S2M1

Ashlock S2M1 Training

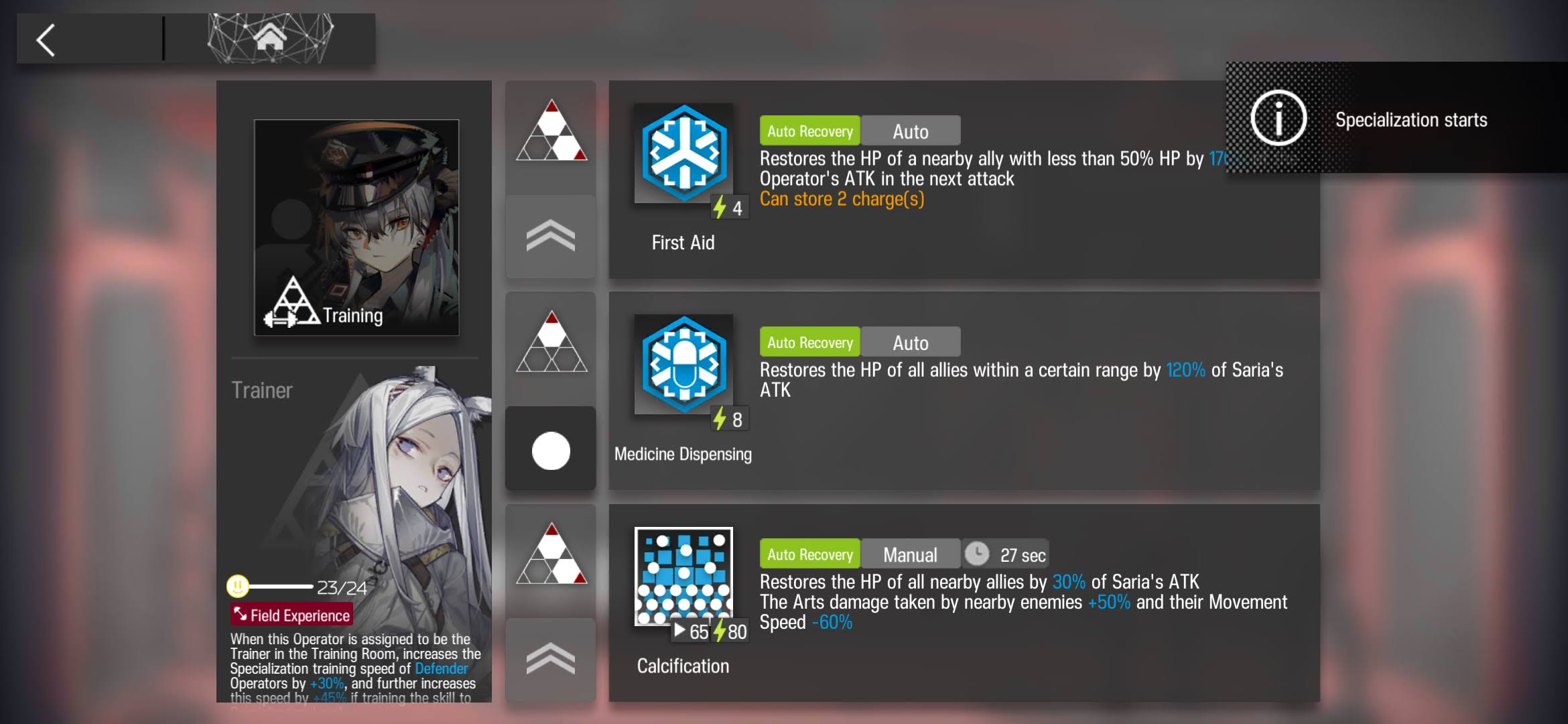

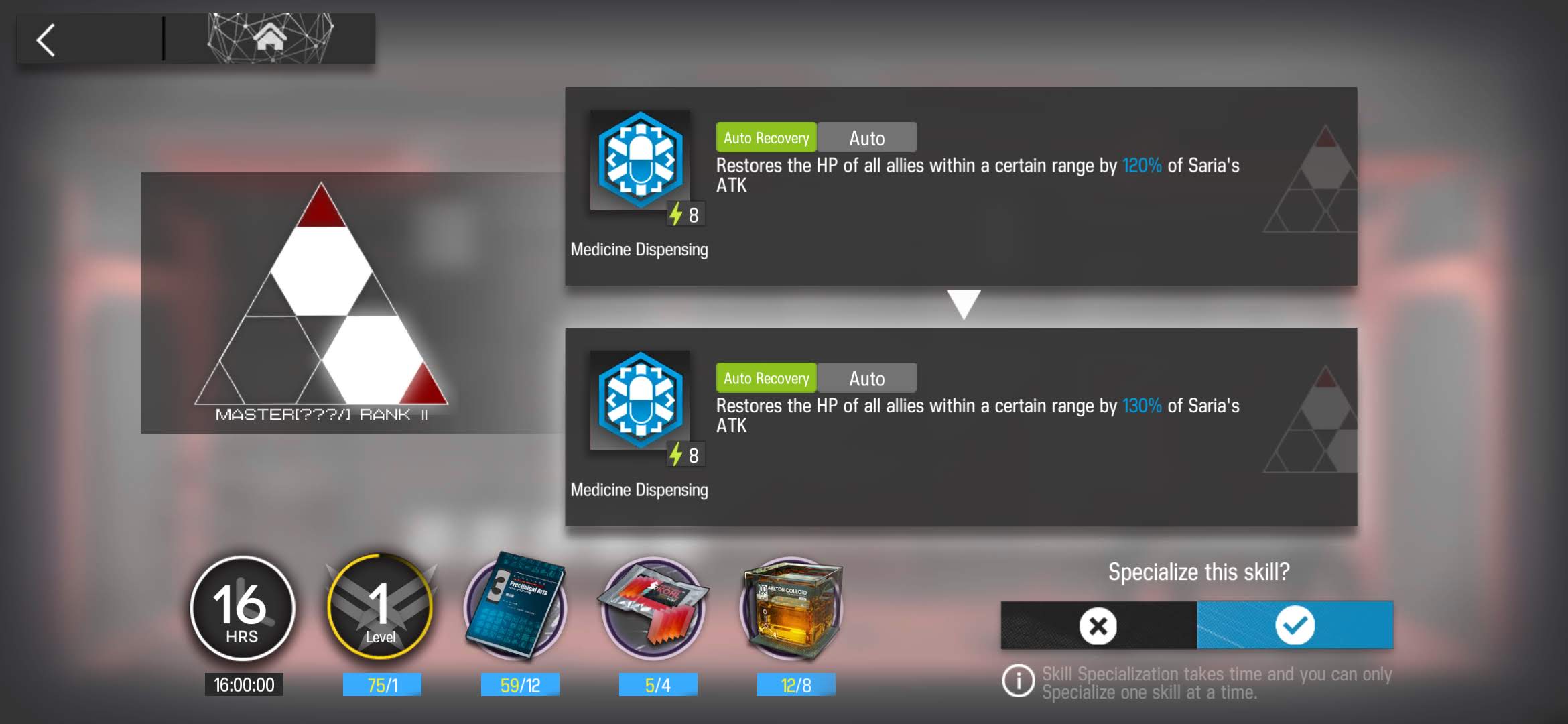

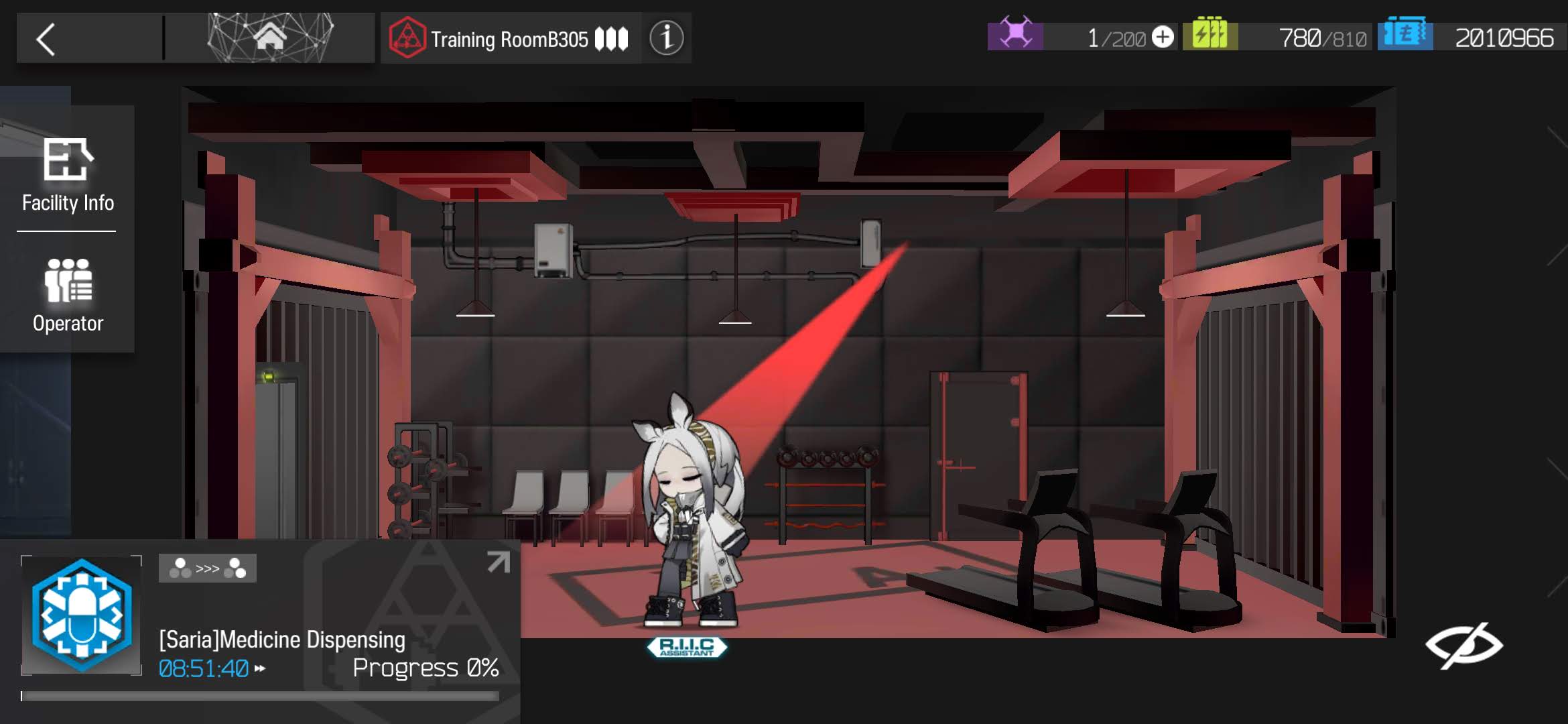

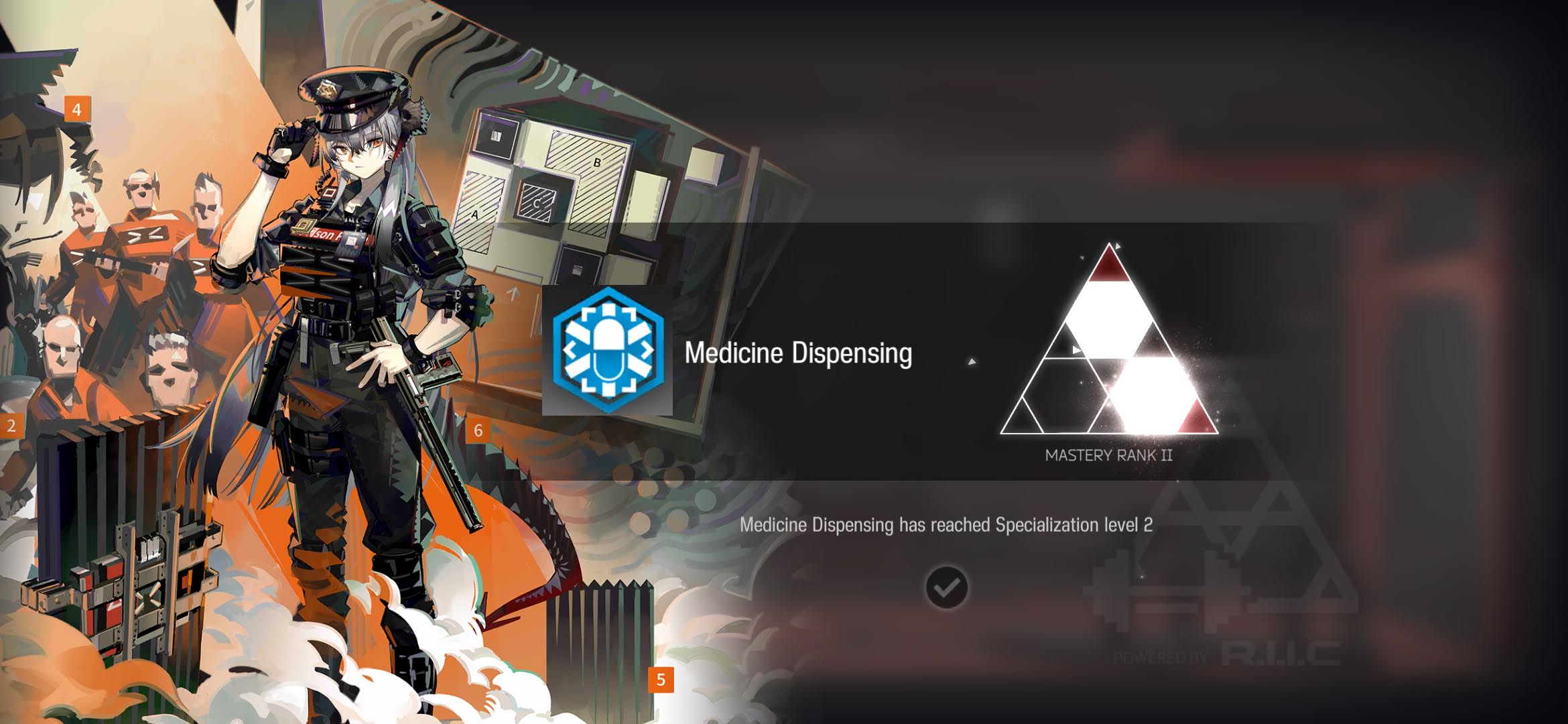

1.3 Saria S2M2

Saria S2M2 Training

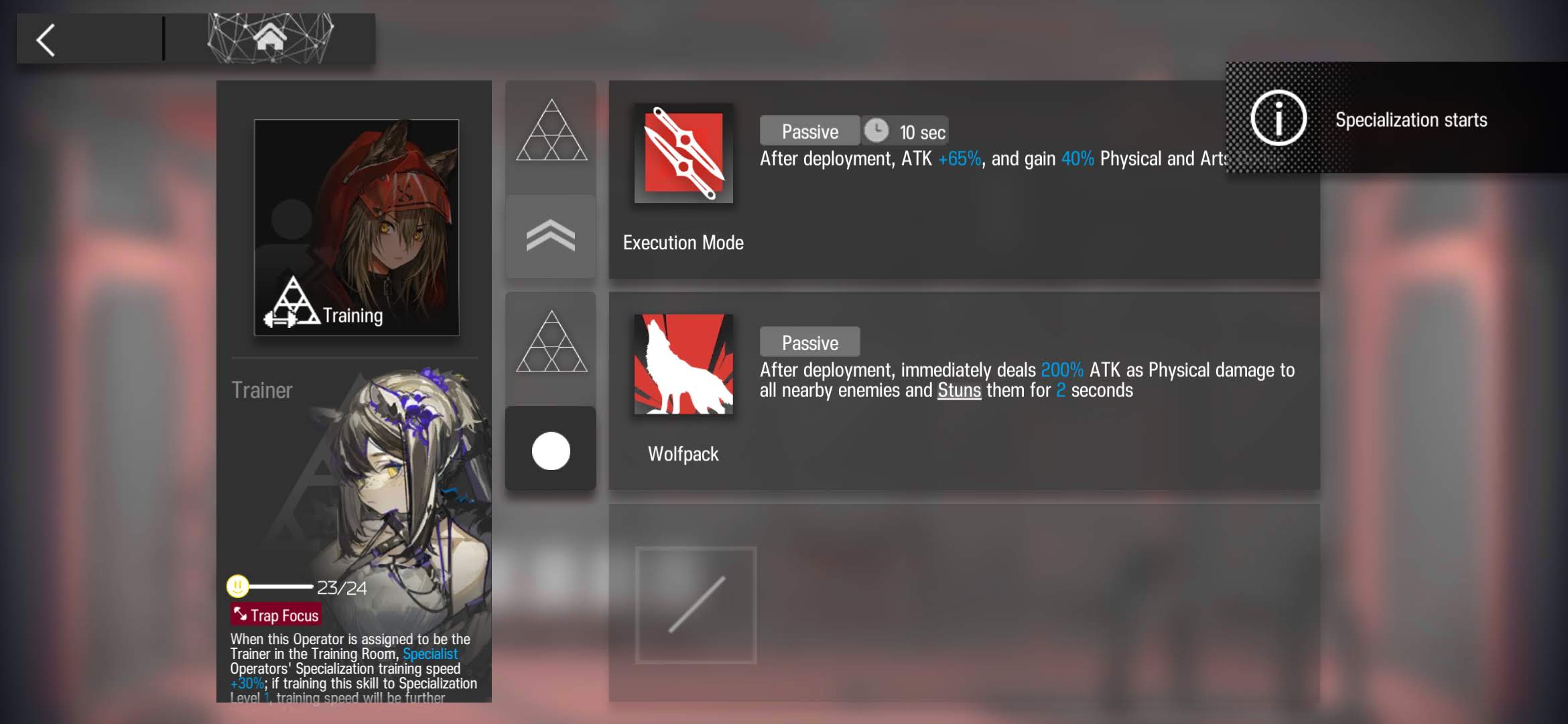

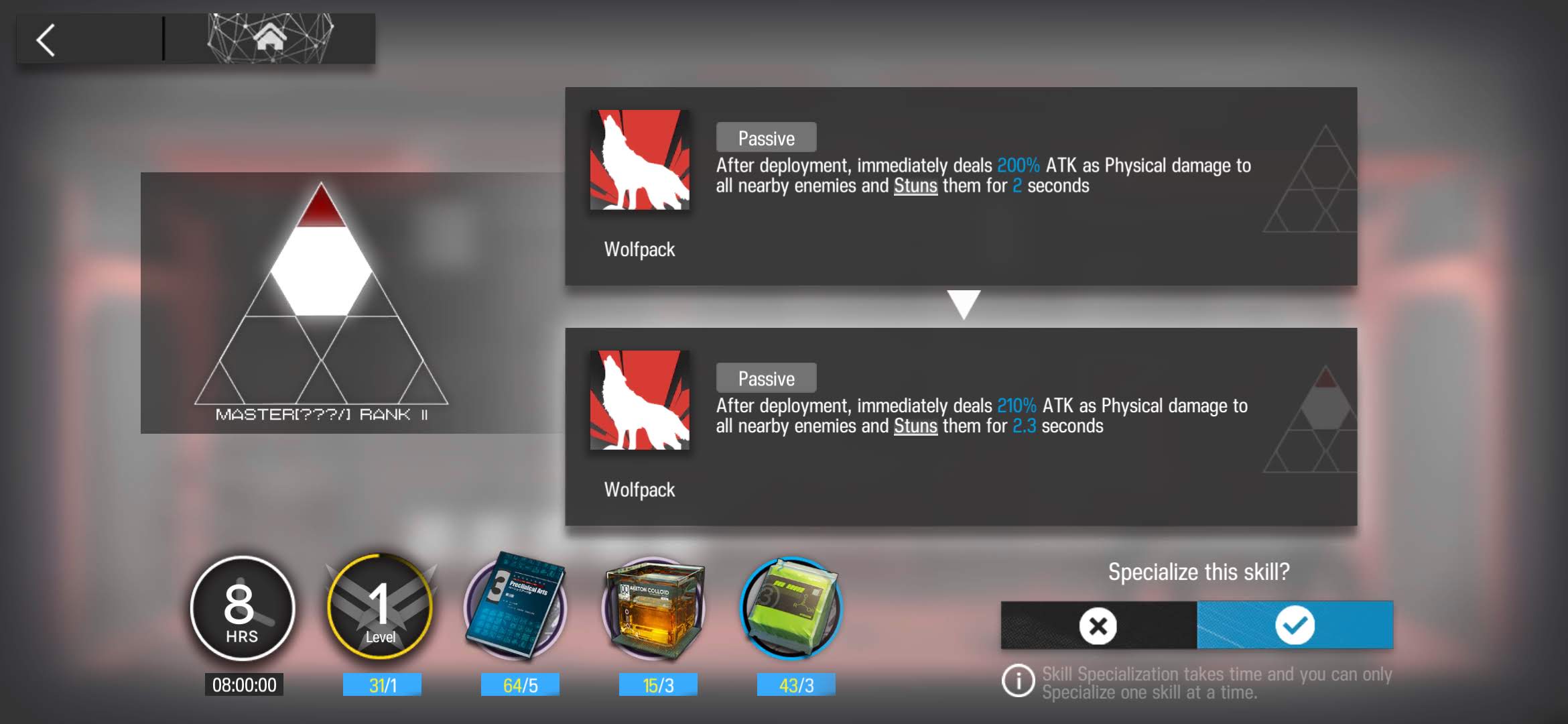

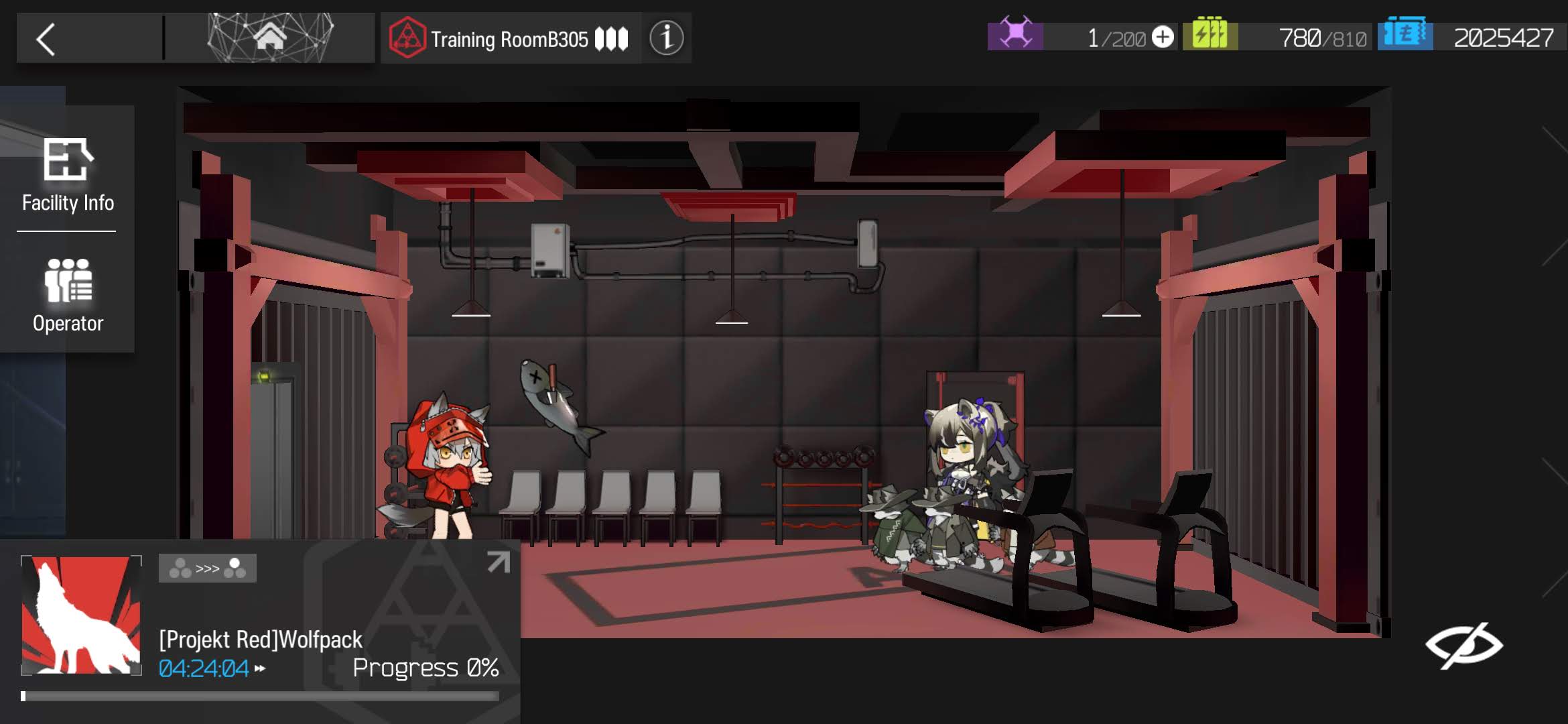

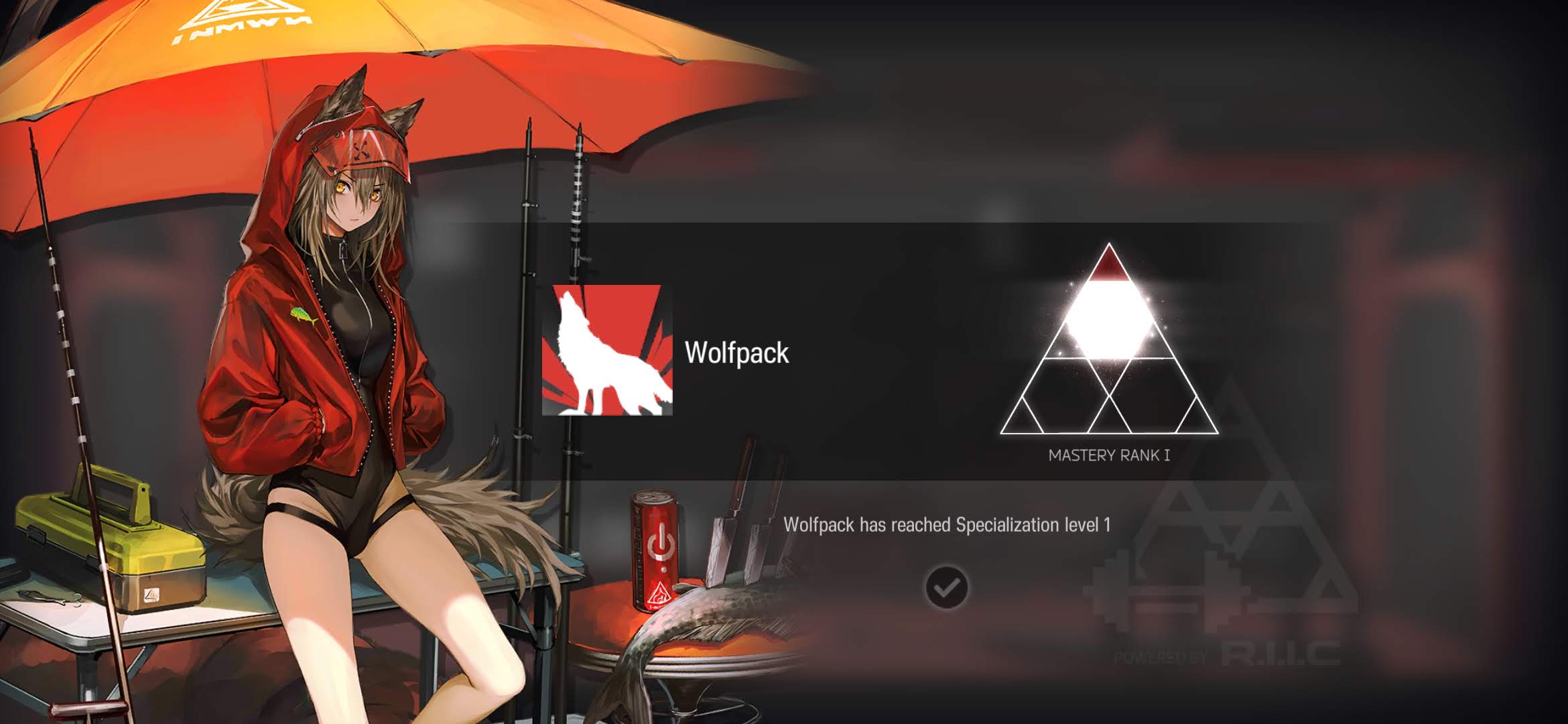

1.4 Projekt Red S2M1

Projekt Red S2M1 Training

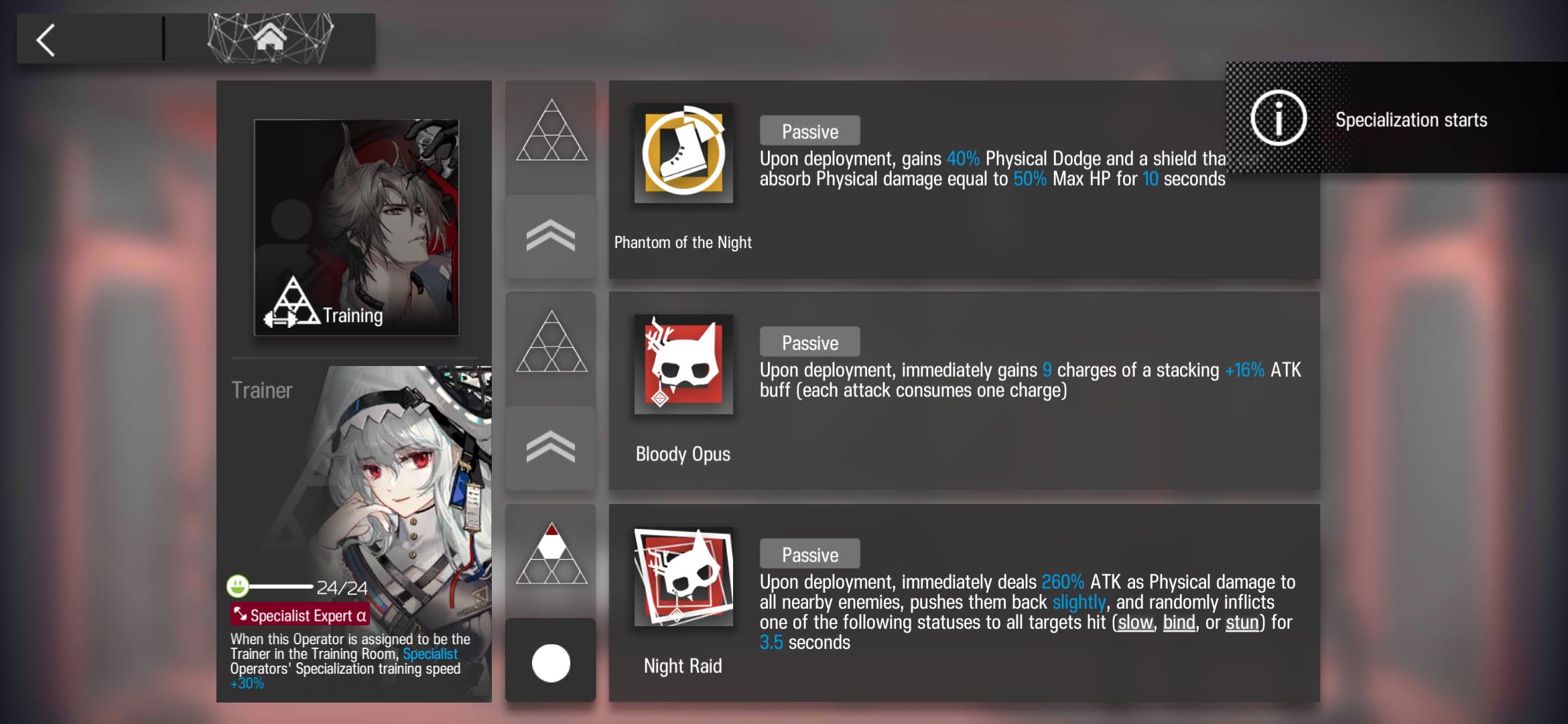

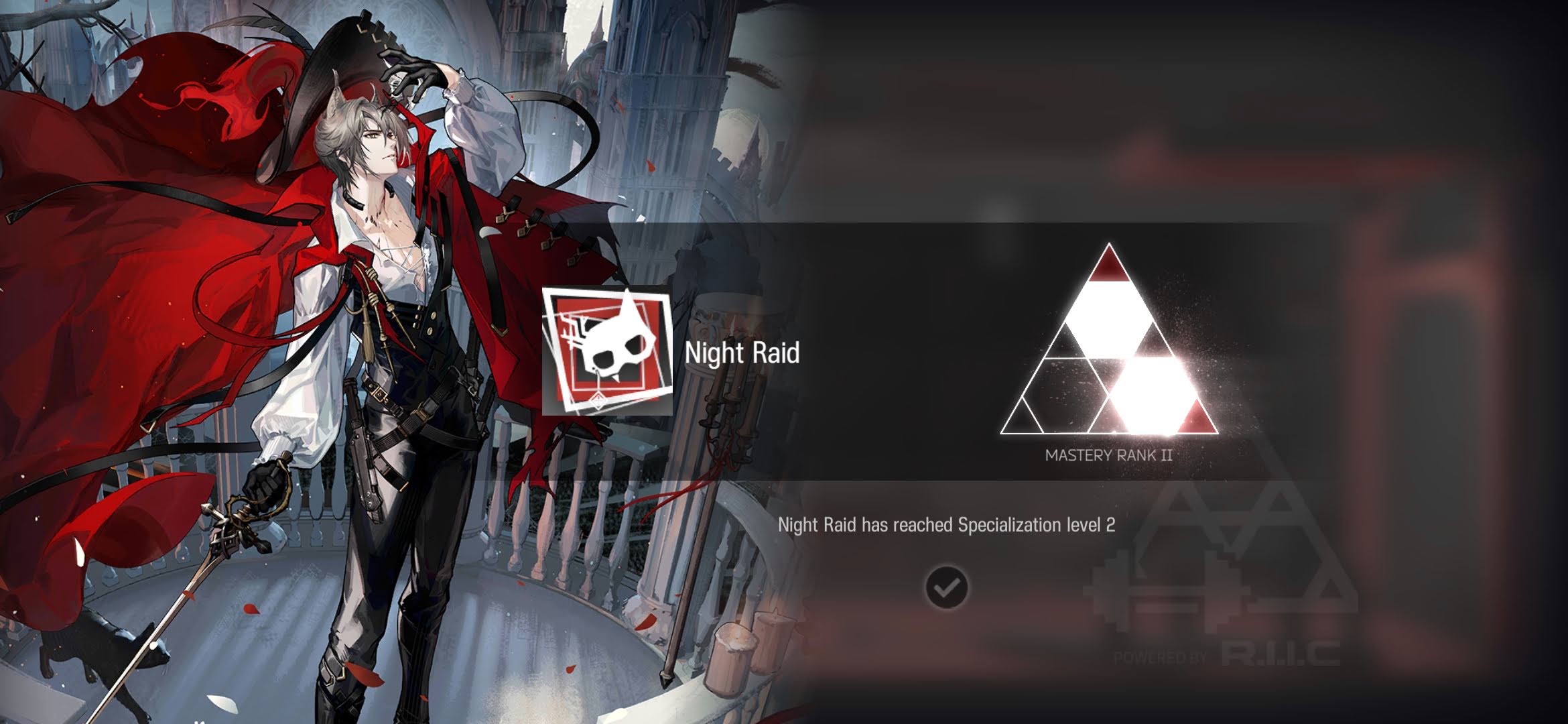

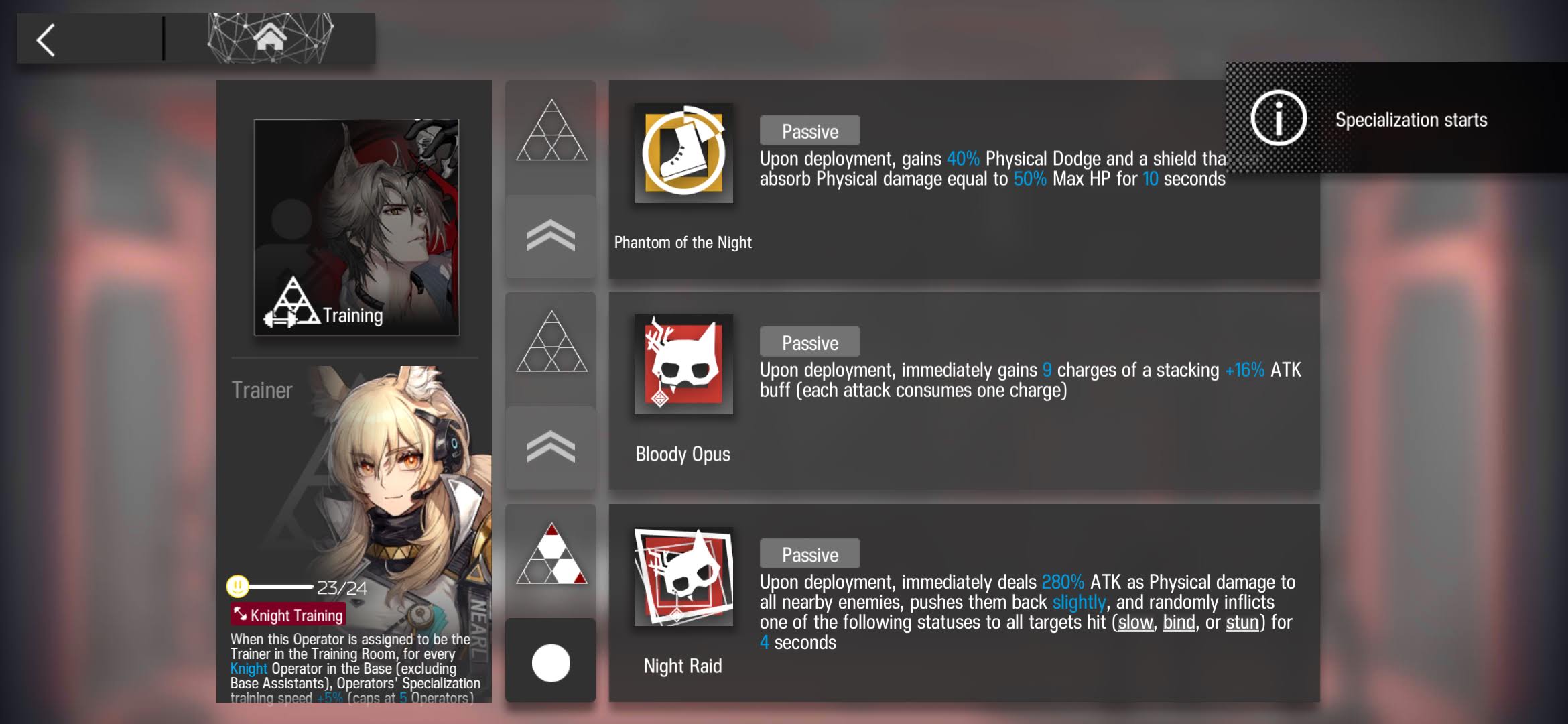

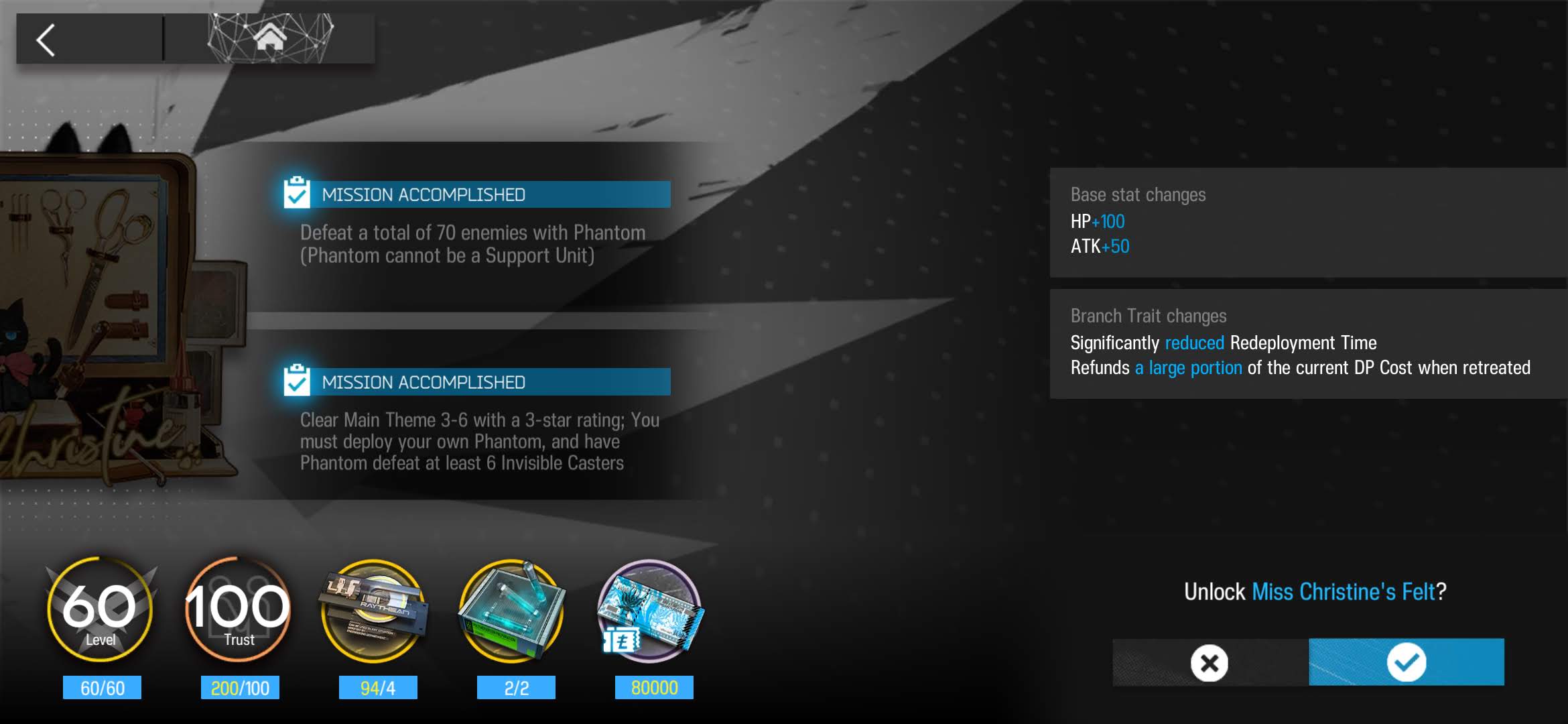

1.5 Phantom S3M2

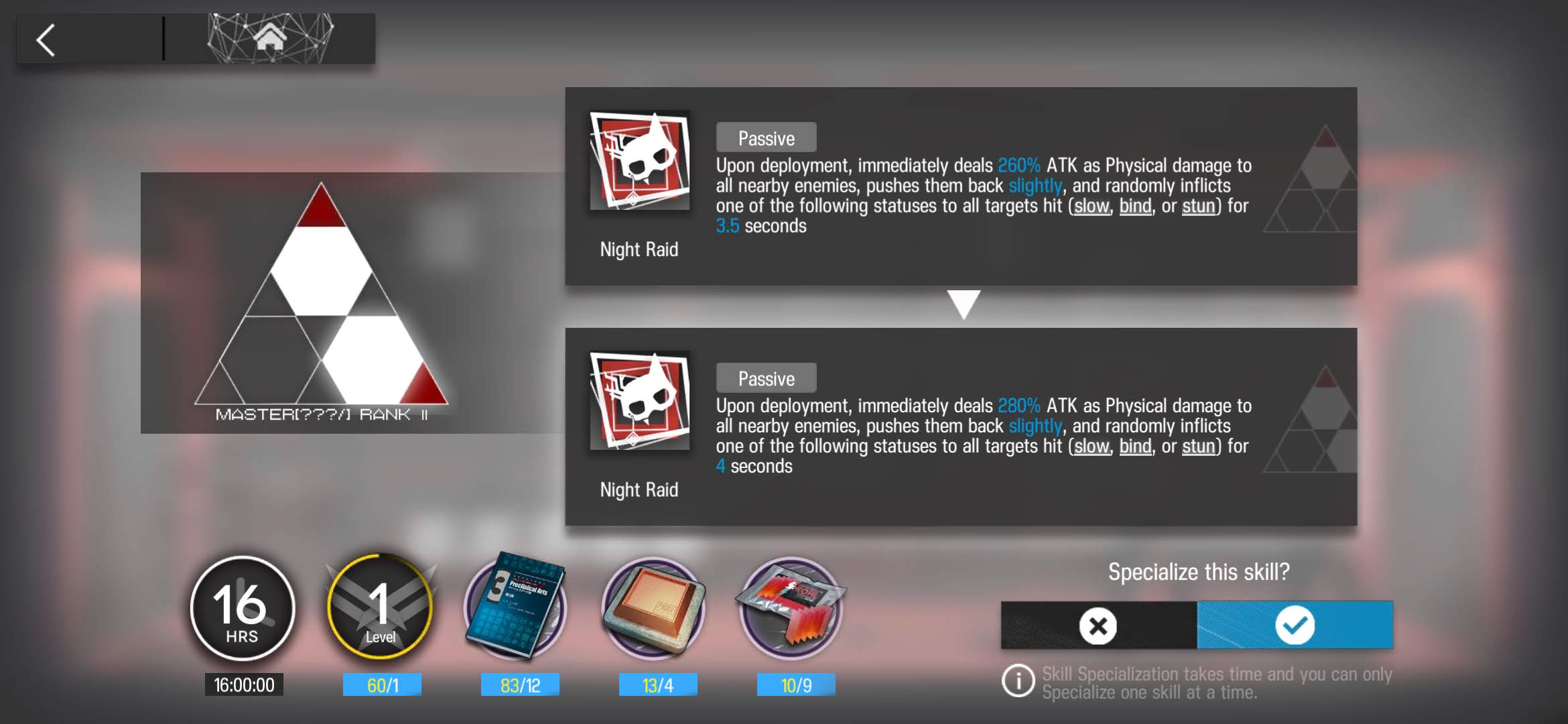

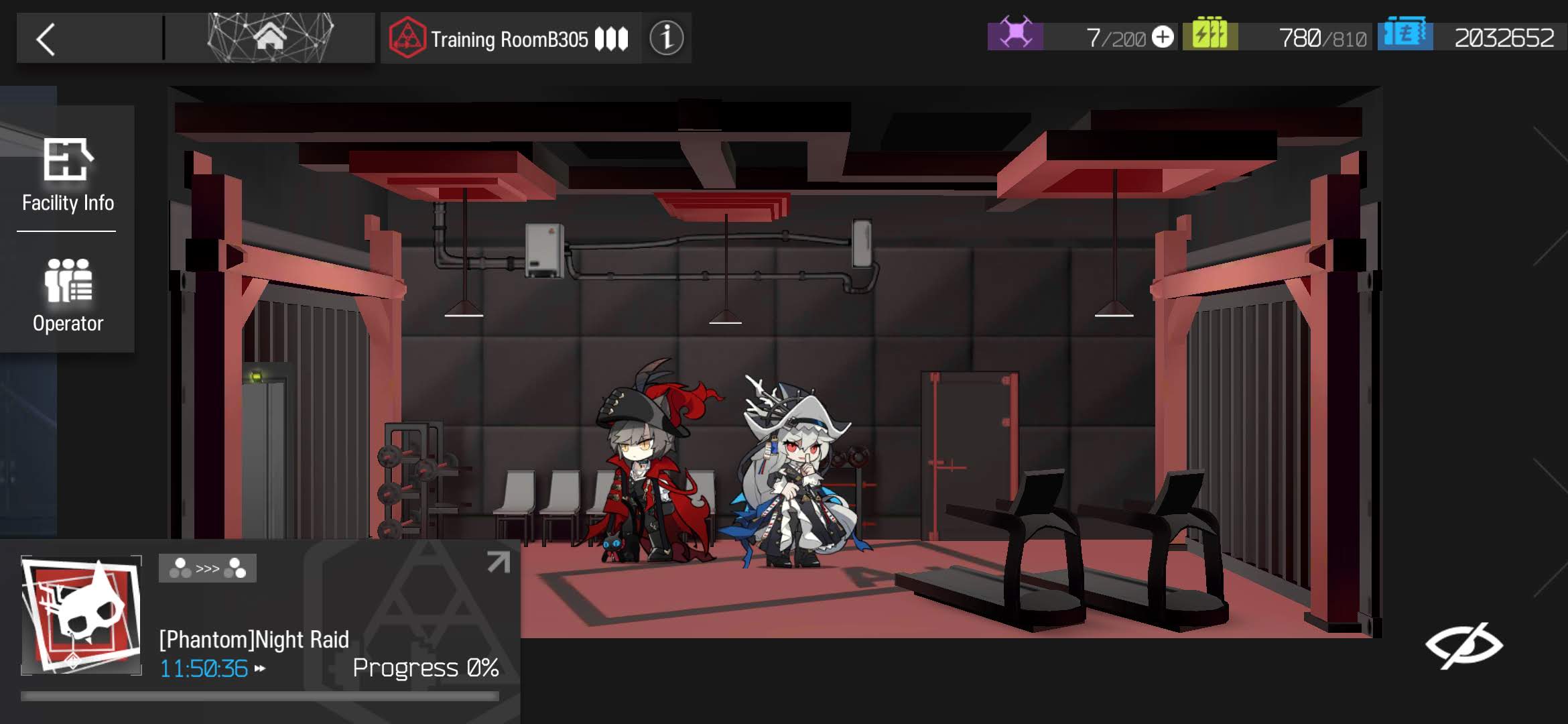

Phantom S3M2 Training

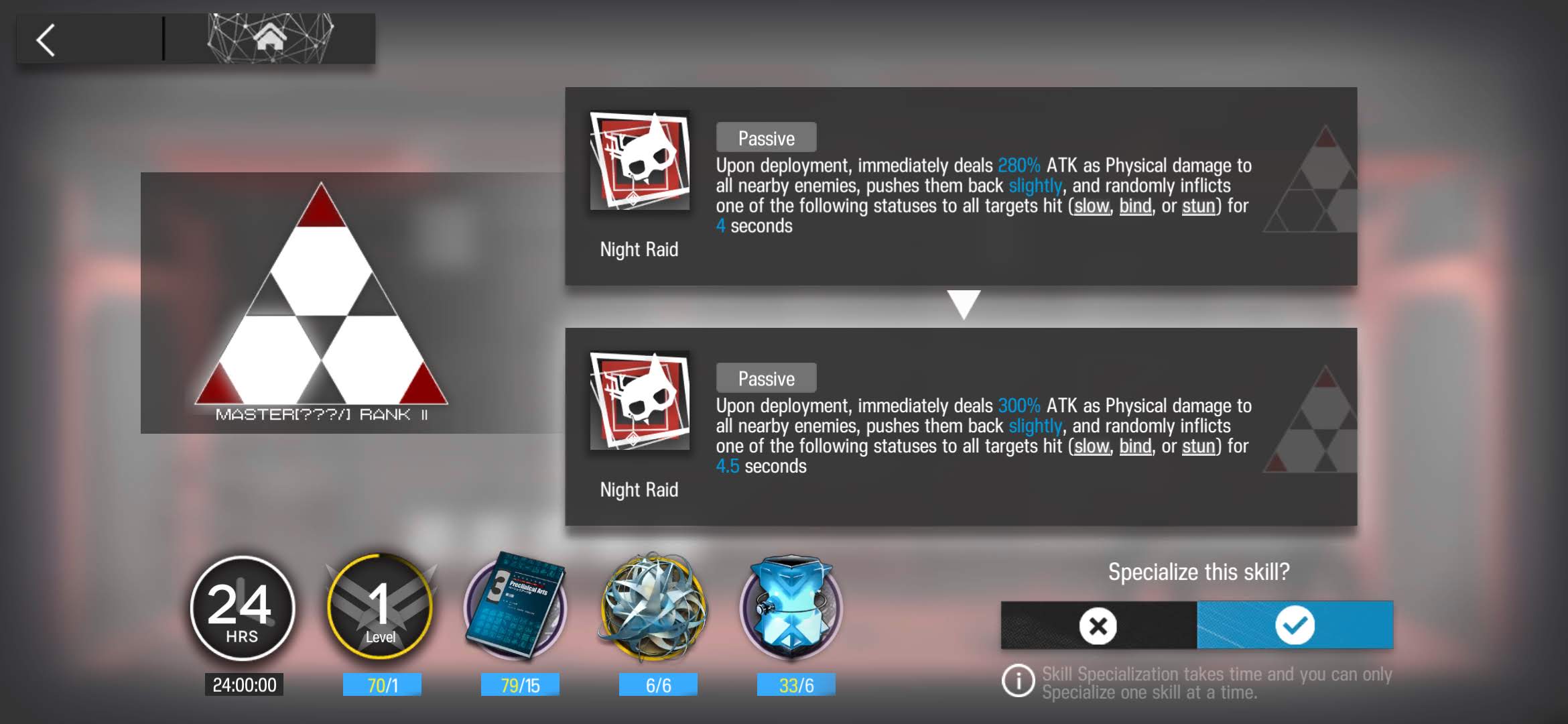

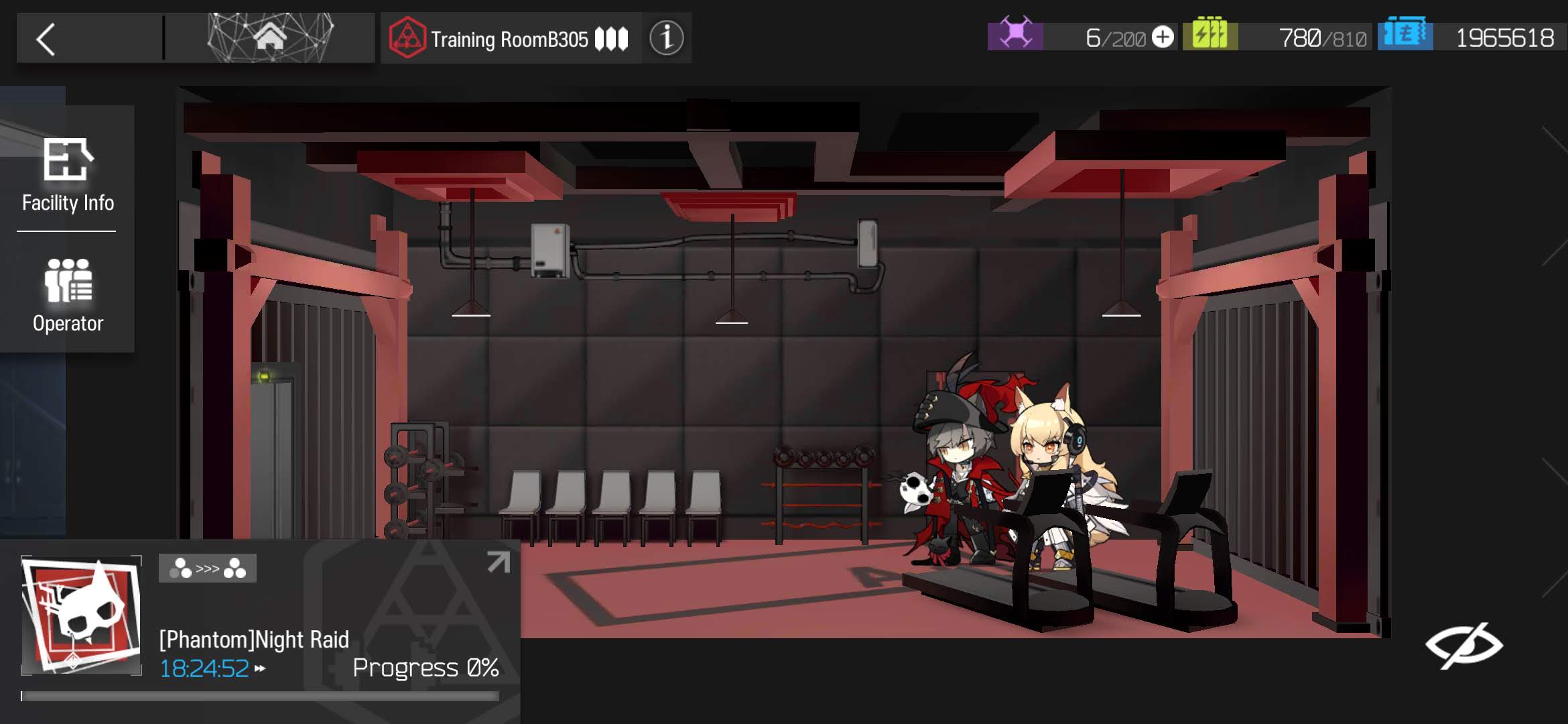

1.6 Phantom S3M3

Phantom S3M3 Training

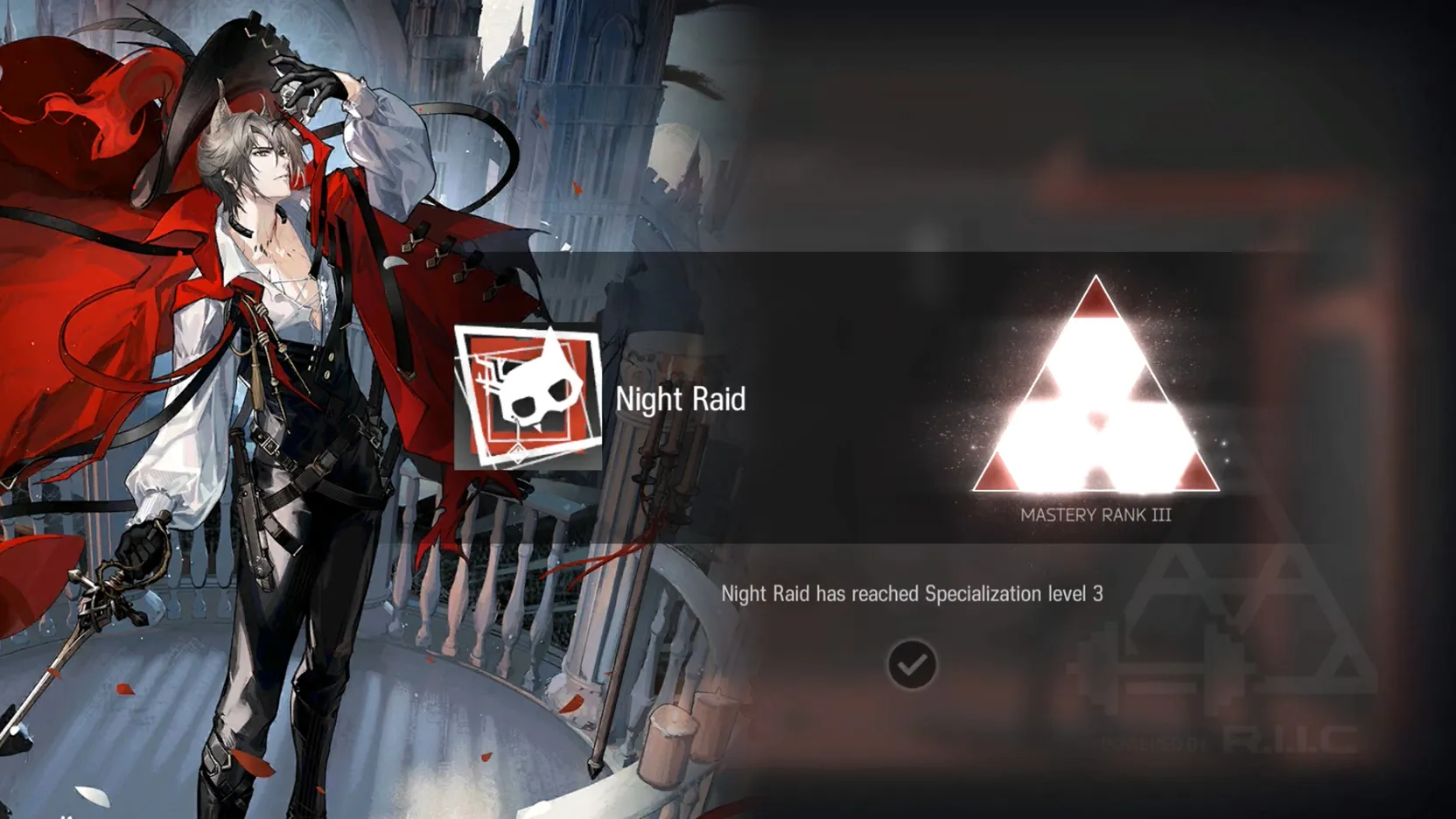

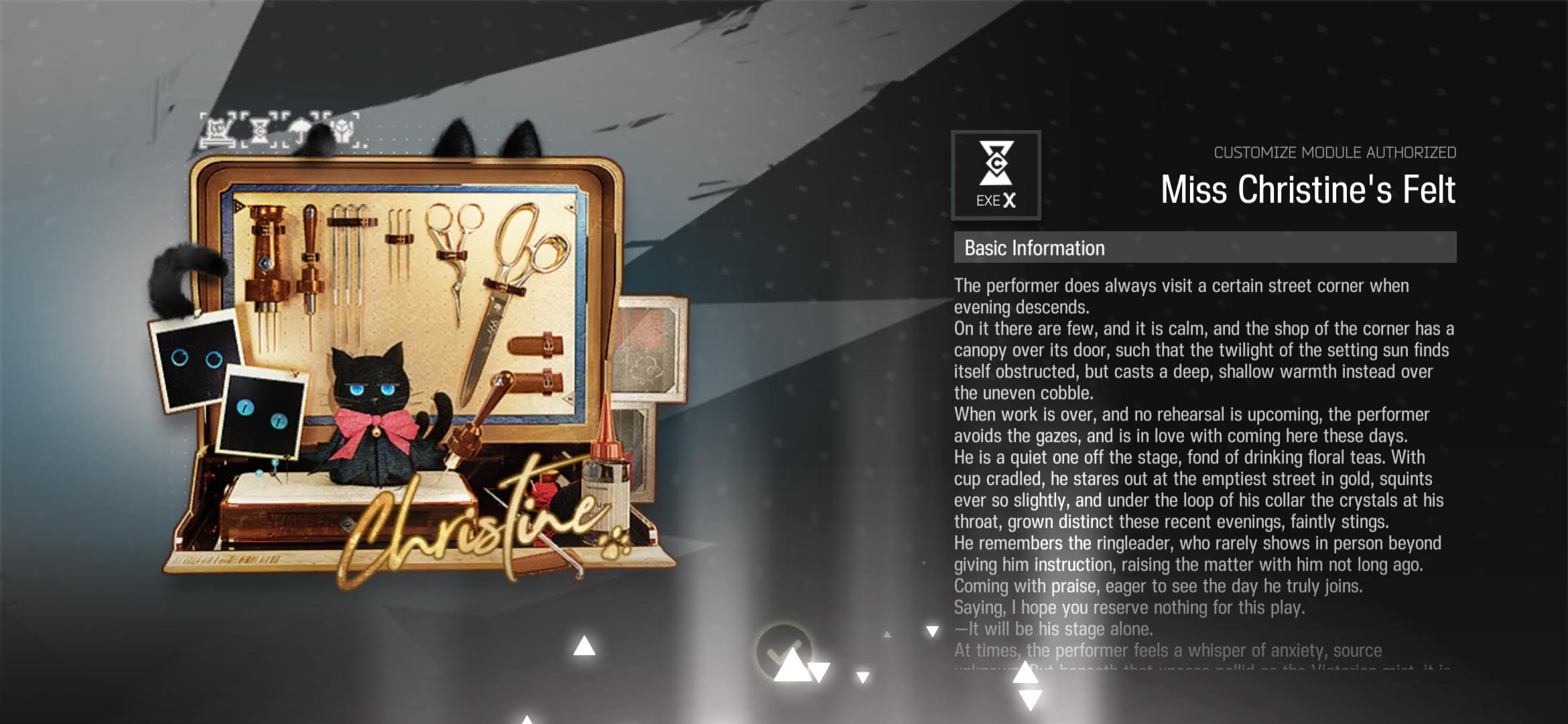

1.7 Phantom Module

1.8 [Gitano] Skin: Unknown Journey

https://gamepress.gg/arknights/skin/unknown-journey

EPOQUE New Arrivals/Unknown Journey. Trendy, made for the spring season, well-fitting and comfortable, comes with a choice of accessories that can be mixed and matched to suit your style.

2 ControlNets

Official implementation of Adding Conditional Control to Text-to-Image Diffusion Models.

ControlNet is a neural network structure to control diffusion models by adding extra conditions.

It copies the weights of neural network blocks into a “locked” copy and a “trainable” copy.

The “trainable” one learns your condition. The “locked” one preserves your model.
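To make the locked/trainable split concrete for myself, here is a minimal framework-free sketch. Everything in it is hypothetical: `Block`, `make_copies`, and `sgd_step` are toy names of mine, not anything from the ControlNet repository, and a real block holds tensors rather than a short list of floats.

```python
import copy
from dataclasses import dataclass


@dataclass
class Block:
    """Toy stand-in for a network block: one scalar weight per channel."""
    weights: list
    trainable: bool = True

    def forward(self, x):
        return [w * v for w, v in zip(self.weights, x)]


def make_copies(pretrained):
    """Duplicate the pretrained weights into a frozen copy and a trainable copy."""
    locked = copy.deepcopy(pretrained)
    locked.trainable = False      # preserves the original model
    trainable = copy.deepcopy(pretrained)
    trainable.trainable = True    # free to learn the new condition
    return locked, trainable


def sgd_step(block, grads, lr=0.1):
    """Plain gradient step that skips frozen blocks (hypothetical helper)."""
    if not block.trainable:
        return
    block.weights = [w - lr * g for w, g in zip(block.weights, grads)]


pretrained = Block(weights=[0.5, -1.0, 2.0])
locked, trainable = make_copies(pretrained)

# Apply the same update to both copies: only the trainable one moves.
sgd_step(locked, [1.0, 1.0, 1.0])
sgd_step(trainable, [1.0, 1.0, 1.0])
```

After the update, `locked.weights` is still identical to the pretrained weights, while `trainable.weights` has drifted away from them.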

Thanks to this, training with a small dataset of image pairs will not destroy the production-ready diffusion models.

The “zero convolution” is a 1×1 convolution with both weight and bias initialized as zeros.

Before training, all zero convolutions output zeros, and ControlNet will not cause any distortion.
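A small pure-Python sketch of why nothing is distorted before training. It assumes the wiring I understand from the paper (locked block output plus a zero convolution of the trainable block, which itself sees the input plus a zero convolution of the condition). `toy_block` is a made-up stand-in for a real network block, and the nested lists stand in for tensors.

```python
def conv1x1(x, weight, bias):
    """1x1 convolution on x[channel][row][col] with weight[out][in] and bias[out].

    Each output pixel only mixes the channels found at the same pixel.
    """
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    return [[[bias[o] + sum(weight[o][i] * x[i][r][c] for i in range(c_in))
              for c in range(w)]
             for r in range(h)]
            for o in range(len(weight))]


def zero_conv_params(channels):
    """Weight and bias of a zero convolution: everything starts at zero."""
    return [[0.0] * channels for _ in range(channels)], [0.0] * channels


def add(a, b):
    """Element-wise sum of two feature maps with the same shape."""
    return [[[p + q for p, q in zip(ra, rb)] for ra, rb in zip(ca, cb)]
            for ca, cb in zip(a, b)]


def toy_block(x, scale):
    """Hypothetical stand-in for a network block: scales every value."""
    return [[[scale * v for v in row] for row in ch] for ch in x]


def controlnet_block(x, cond, scale, zc_in, zc_out):
    """Locked output plus a zero-convolved trainable branch (assumed wiring)."""
    locked_out = toy_block(x, scale)
    trainable_in = add(x, conv1x1(cond, *zc_in))
    trainable_out = toy_block(trainable_in, scale)  # same weights at init
    return add(locked_out, conv1x1(trainable_out, *zc_out))


x = [[[1.0, 2.0], [3.0, 4.0]], [[-1.0, 0.5], [0.0, 2.5]]]     # 2 channels, 2x2
cond = [[[9.0, 9.0], [9.0, 9.0]], [[7.0, 7.0], [7.0, 7.0]]]   # e.g. an edge map

zero_out = conv1x1(cond, *zero_conv_params(2))
out = controlnet_block(x, cond, 3.0, zero_conv_params(2), zero_conv_params(2))
```

Here `zero_out` is all zeros and `out` equals `toy_block(x, 3.0)`, so the condition has no effect yet. One detail worth noting: for y = w*x + b the gradient with respect to w is x, not w, so the zero-initialized weights can still receive non-zero updates once training starts.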

No layer is trained from scratch. You are still fine-tuning. Your original model is safe.

This allows training on small-scale or even personal devices.

This is also friendly to merge/replacement/offsetting of models/weights/blocks/layers.

Ablation Study: Why do ControlNets use a deep encoder? What if it was lighter? Or even an MLP?

https://github.com/lllyasviel/ControlNet/discussions/188